ISAACS is a general-purpose framework for controlling fleets of robotic agents through immersive media. I've worked on a range of components of the project: I designed AR and VR interfaces, built 3D mapping solutions, wrote networking and data collection modules, and adapted dynamic control planners for different types of drones. We also built a series of applications on top of the ISAACS platform to test different features and modalities, described below.

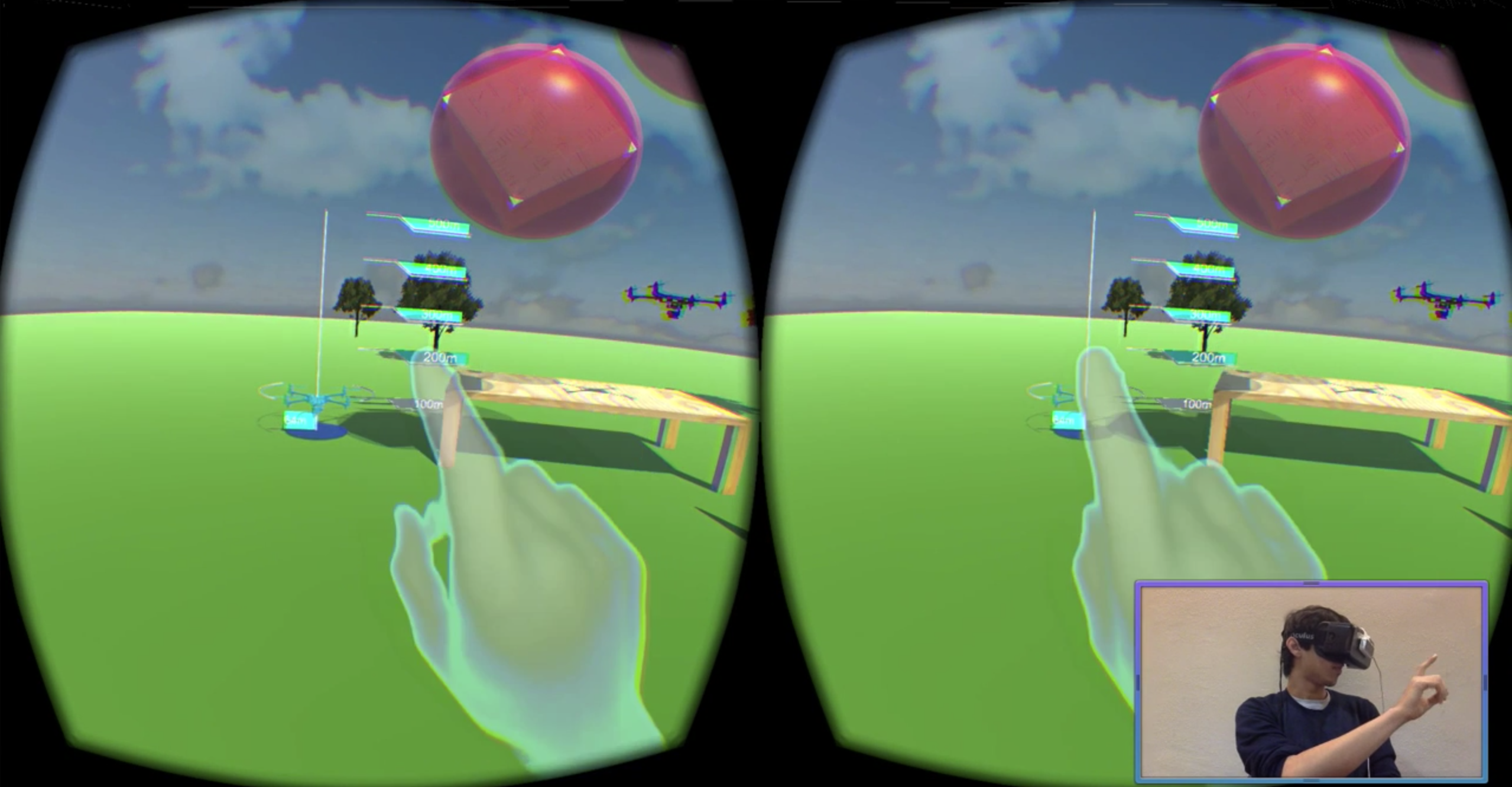

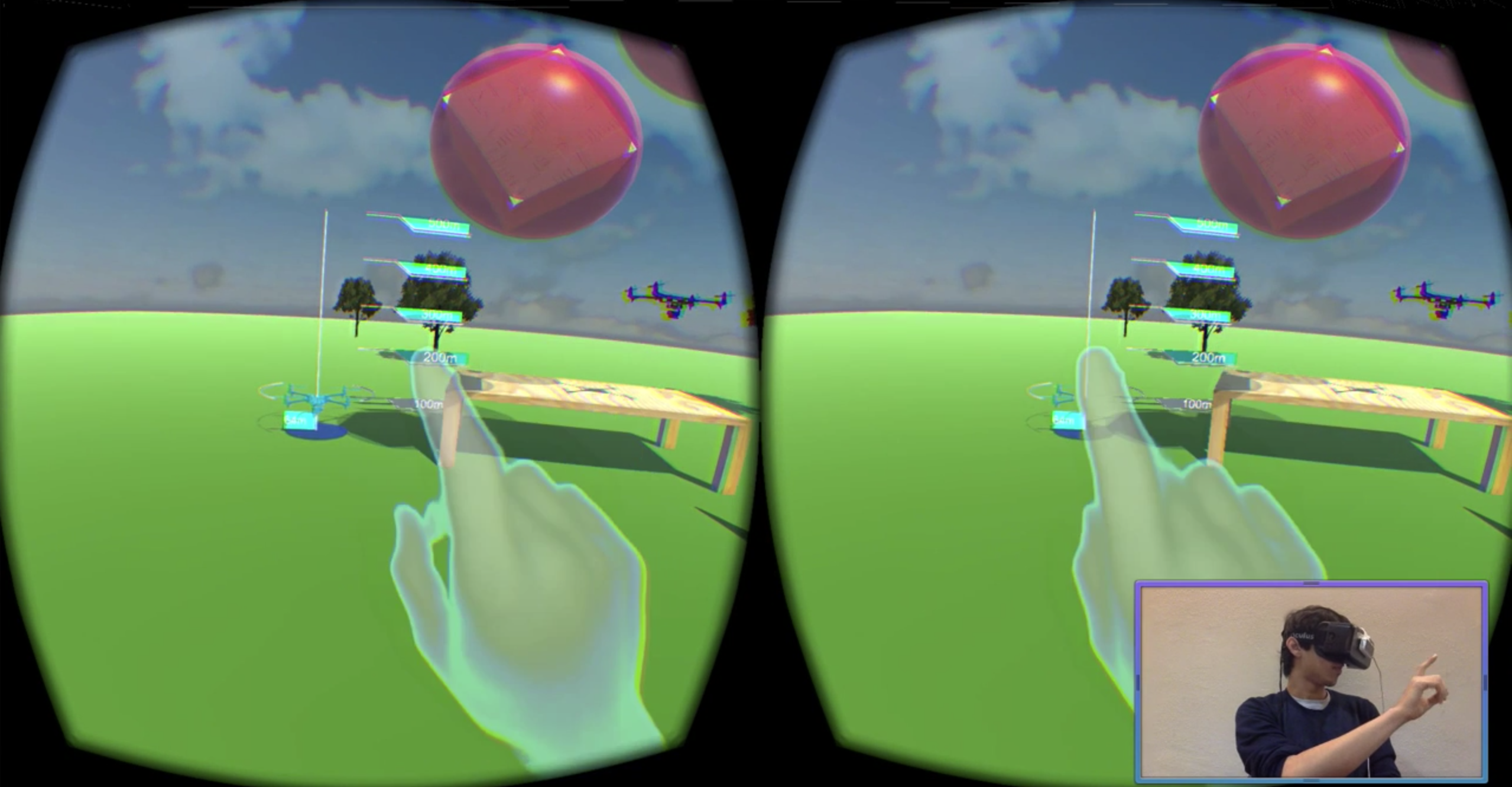

Virtual Drones in Virtual Environments: Testing obstacle avoidance and flight planning is risky and expensive with real drones. Instead, we proposed a model in which novel algorithms are deployed on virtual drones that are controlled inside a simulated environment. We used VR to interact with the drones in this simulation: to add and remove drones, arrange virtual obstacles, and log data. We also worked on importing 3D scans of real environments and exporting in-sim flight paths to real drones, features that are especially useful for drone videographers.

Physical Drones in Virtual Environments: Building on the above project, we extended the simulator to connect to real, flying drones. Ender's Game style, actions in the simulator are carried out by real drones, which can be located anywhere in the world. We used control theory and reachability analysis to check that the operator's trajectories were physically feasible and, if they were not, to correct them dynamically. We also designed various ways of conveying those corrections to the user.
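To make the "check, then correct" idea concrete, here is a minimal sketch. It is not the reachability analysis we used: it swaps in a much simpler stand-in, a single speed limit, and assumes a trajectory is a list of `(t, x, y, z)` waypoints. The function names and the `max_speed` parameter are hypothetical.

```python
import math

def segment_speeds(waypoints):
    """Average speed (m/s) needed on each segment.

    Each waypoint is (t, x, y, z): time in seconds, position in metres.
    """
    speeds = []
    for (t0, *p0), (t1, *p1) in zip(waypoints, waypoints[1:]):
        speeds.append(math.dist(p0, p1) / (t1 - t0))
    return speeds

def is_feasible(waypoints, max_speed):
    """Simplified feasibility test: no segment may exceed max_speed."""
    return all(s <= max_speed for s in segment_speeds(waypoints))

def retime(waypoints, max_speed):
    """Correct an infeasible trajectory by stretching its timestamps.

    The path geometry is unchanged; only the schedule is slowed down
    on segments that would otherwise exceed max_speed.
    """
    out = [waypoints[0]]
    for (t0, *p0), (t1, *p1) in zip(waypoints, waypoints[1:]):
        dt = max(t1 - t0, math.dist(p0, p1) / max_speed)
        out.append((out[-1][0] + dt, *p1))
    return out

# The operator asks for 10 m in 1 s, but this (hypothetical) drone tops out at 5 m/s.
requested = [(0, 0, 0, 0), (1, 10, 0, 0), (2, 10, 5, 0)]
corrected = retime(requested, max_speed=5)
```

A real system would reason about the full vehicle dynamics (acceleration, attitude, disturbances) rather than a single speed cap, but the structure is the same: test the operator's input against a model of what the drone can do, and adjust it when it falls outside.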

UX for Virtual Environments: In designing and testing interactions for the simulator, we introduced the notion of VR widgets: spatial UI elements that can be dragged into the user's virtual environment and customized pre-flight. We experimented with HUDs, cockpit overlays, and spatial interfaces, and measured motion sickness and comfort in each modality. We also experimented with LeapMotion hand-tracking for flight control, but ultimately switched back to a controller after finding hand tracking too inaccurate and fatiguing.

Physical Drones in Physical Environments: When the user is colocated with the drones (in the same physical space), Augmented Reality can make flying them easier, safer, and more efficient. Instead of having to look down at a remote controller or smartphone app, the operator sees relevant information projected spatially in the world. Using multimodal input (voice and hand gestures), operators can control drones at a higher level of abstraction (say "go to this point" instead of using a 6-axis controller). They can also fly more than one drone at a time, which is impossible with traditional remote controllers. I deployed our SLAM solution to the drone, built the localization synchronization between the operator and the drones, and wrote the network module to pass controls and sensor data between the AR system and the drones.
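As an illustration of what a high-level command might look like on the wire, here is a small sketch. It does not reproduce the actual ISAACS network module: the `GotoCommand` type, its field names, the `shared_map` frame label, and the JSON encoding are all assumptions made for this example.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class GotoCommand:
    """Hypothetical 'go to this point' command for one drone."""
    drone_id: str
    x: float
    y: float
    z: float
    frame: str = "shared_map"  # assumed name for the synchronized coordinate frame

def encode(cmd):
    """Serialize a command to UTF-8 JSON bytes, tagged with its message type."""
    return json.dumps({"type": "goto", **asdict(cmd)}).encode("utf-8")

def decode(payload):
    """Parse bytes produced by encode() back into a GotoCommand."""
    msg = json.loads(payload.decode("utf-8"))
    if msg.pop("type") != "goto":
        raise ValueError("unsupported message type")
    return GotoCommand(**msg)

def broadcast_goto(drone_ids, point):
    """Build one packet per drone, all targeting the same point."""
    x, y, z = point
    return [encode(GotoCommand(d, x, y, z)) for d in drone_ids]

# One gesture ("go to this point") fans out to several drones.
packets = broadcast_goto(["drone-1", "drone-2"], (4.0, 2.5, 1.8))
```

The point of the sketch is the level of abstraction: the operator specifies a destination once, and the same message shape can be sent to any number of drones, which a stick-based controller cannot do.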

Safe Real-Time Navigation of Dynamic Systems: Real-time autonomous motion planning and navigation is hard, especially when we care about safety. It becomes even more difficult for systems with complicated dynamics, external disturbances like wind, and a priori unknown environments. PhD students in the lab worked to “robustify” existing real-time motion planners to guarantee safety during navigation of dynamic systems. I am working on extending the motion planner from their work to larger, heavier drones with more complicated dynamics, such as the DJI Matrice 100 and 600.

(Left) A standard LQR controller is unable to keep the quadrotor within the tracking error bound. (Right) The optimal tracking controller keeps the quadrotor within the tracking bound, even during radical changes in the planned trajectory.
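The comparison in the figure comes down to one quantity: the largest distance between where the planner wanted the quadrotor and where it actually was. A minimal sketch of that check, assuming the planned and actual trajectories are sampled at the same time steps as lists of `(x, y, z)` points (the function names and the sample values are hypothetical, not data from our experiments):

```python
import math

def max_tracking_error(planned, actual):
    """Largest Euclidean distance between matching planned/actual samples."""
    return max(math.dist(p, a) for p, a in zip(planned, actual))

def within_bound(planned, actual, bound):
    """True if the vehicle never strays farther than `bound` from the plan."""
    return max_tracking_error(planned, actual) <= bound

# Made-up samples for illustration only.
planned = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
actual = [(0.0, 0.0, 0.0), (1.0, 0.3, 0.0), (2.0, 0.0, 0.4)]
worst = max_tracking_error(planned, actual)
```

Note that this only measures the error after the fact. The safety guarantee in the planner comes from having a bound that the controller is known to respect in advance, so that obstacles can be padded by that amount at planning time.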

We are using this motion planner for the waypoint-following behaviours in the drone control applications described above. The hope is that mixing human intelligence with artificial intelligence could create fleets of robotic agents that are safer, more efficient, and easier to manage than ones controlled entirely by human operators (which can't scale with the number of robotic agents) or entirely by AI (which fails at defining meaningful goals).